Finding the Goldilocks Density: How CoD Prompting Gets Summaries Just Right

Recent advances in AI summarisation owe much to the rise of large language models (LLMs) such as GPT-3 and GPT-4. Rather than being trained on labelled summarisation datasets, these models can generate summaries from a well-chosen prompt alone, which allows control over summary length, topics covered, and style. An important but often overlooked aspect is information density: how much detail to include within a constrained length. The goal is a summary that is informative yet clear, and striking that balance is challenging.

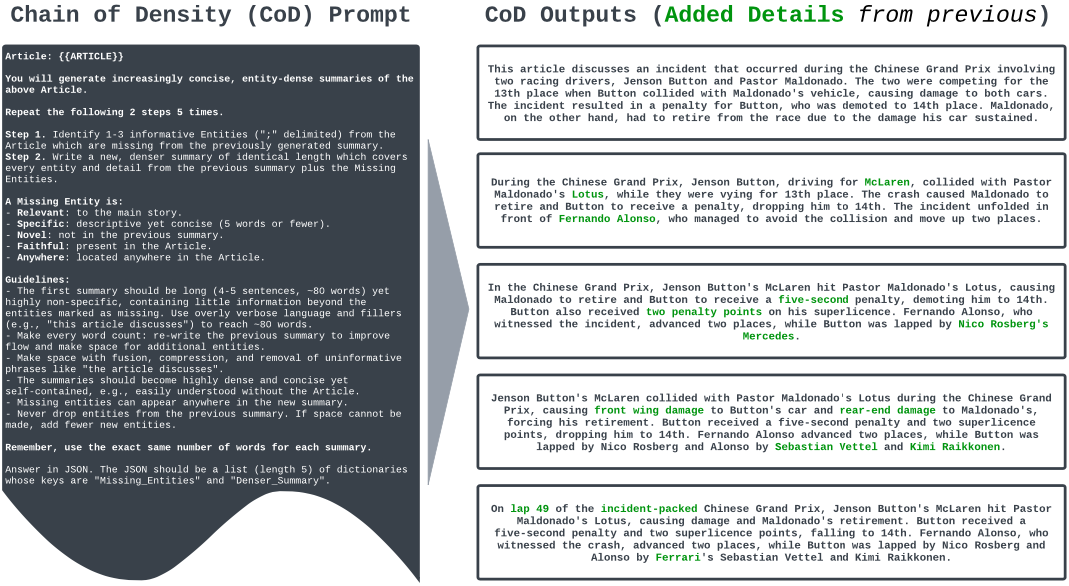

A new technique called Chain of Density (CoD) prompting helps address this tradeoff. Recently published research explains the approach and provides insights based on human evaluation.

Overview of Chain of Density Prompting:

The CoD method works by incrementally increasing the entity density of GPT-4 summaries without changing their length. First, GPT-4 generates an initial sparse summary focused on just 1-3 entities. Then, over several iterations, it identifies 1-3 salient entities from the source text that are missing from the previous summary and fuses them in.

Source: Griffin Adams, Alexander Fabbri, Faisal Ladhak, Eric Lehman, Noémie Elhadad (2023). From Sparse to Dense: GPT-4 Summarization with Chain of Density Prompting.

To make room, GPT-4 is prompted to abstract, compress content, and merge entities. Each resulting summary contains more entities per token than the last. The researchers ran five CoD steps on each of 100 CNN/Daily Mail articles.
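As a rough illustration of the loop described above, the following Python sketch drives densification from code, with one model call per step. The prompt wording, the function names (`build_initial_prompt`, `build_densify_prompt`, `chain_of_density`), the `llm` callable, and the `max_words` default are all assumptions made for this example; they are not the researchers' actual prompt or implementation.

```python
from typing import Callable, List


def build_initial_prompt(article: str, max_words: int) -> str:
    """Hypothetical prompt asking for a sparse first summary."""
    return (
        f"Article:\n{article}\n\n"
        f"Write a summary of about {max_words} words that mentions only "
        "1-3 of the most important entities."
    )


def build_densify_prompt(article: str, previous: str, max_words: int) -> str:
    """Hypothetical prompt asking for a denser rewrite of the same length."""
    return (
        f"Article:\n{article}\n\n"
        f"Previous summary:\n{previous}\n\n"
        "Identify 1-3 salient entities from the article that are missing "
        "from the previous summary. Rewrite the summary so that it keeps "
        "every entity already present and adds the missing ones, using "
        f"abstraction, compression, and fusion to stay at about {max_words} words."
    )


def chain_of_density(
    article: str,
    llm: Callable[[str], str],  # assumed: any function mapping a prompt to text
    steps: int = 5,
    max_words: int = 80,  # illustrative default, not a value from this article
) -> List[str]:
    """Return `steps` summaries, each intended to be denser than the last."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    # Step 1: the sparse starting summary.
    summaries = [llm(build_initial_prompt(article, max_words))]
    # Remaining steps: feed the latest summary back in and ask for a denser one.
    for _ in range(steps - 1):
        prompt = build_densify_prompt(article, summaries[-1], max_words)
        summaries.append(llm(prompt))
    return summaries
```

Passing the model in as a plain callable keeps the sketch independent of any particular API client: `llm` can wrap whichever chat endpoint is available, or a stub for testing. Nothing in the code enforces the length limit or checks that density actually rises; both depend on the model following the prompt.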

Key Findings:

- Human annotators preferred CoD summaries whose density was close to that of human-written summaries over the sparser summaries GPT-4 produces from a vanilla prompt.

- Over successive iterations, CoD summaries became more abstract, fused more content, and showed less bias toward the opening of the source text.

- There was a peak density beyond which coherence declined due to awkward fusions of entities.

- An entity density of about 0.15 entities per token was ideal, compared with 0.122 for vanilla GPT-4 summaries and 0.151 for human-written summaries.
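The density figures above are ratios of entities to tokens. The Python sketch below shows that arithmetic in its simplest form. It assumes whitespace tokenisation and a caller-supplied list of entity strings, both simplifications chosen for this example; a real pipeline would rely on a named-entity recogniser and a proper tokeniser, so the numbers it yields are not directly comparable with those reported in the research. The function name `entity_density` is hypothetical.

```python
from typing import Iterable


def entity_density(summary: str, entities: Iterable[str]) -> float:
    """Entities per token, using crude whitespace tokens (an assumption)."""
    tokens = summary.split()
    if not tokens:
        return 0.0
    text = summary.lower()
    # Count each distinct entity once if it appears anywhere in the summary.
    unique = {e.lower() for e in entities if e.strip()}
    found = sum(1 for e in unique if e in text)
    return found / len(tokens)


# Usage: three of the four candidate entities appear in a seven-token summary.
density = entity_density(
    "Alice met Bob in Paris on Monday",
    ["Alice", "Bob", "Paris", "Berlin"],
)
print(f"{density:.3f}")
```

Because matching is by substring, a short entity can match inside a longer word; that is acceptable for a quick comparison between drafts of the same summary, but not for measurement.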

Contributions:

The researchers introduced the CoD prompting strategy and thoroughly evaluated the impact of densification. They provided key insights into balancing informativeness and clarity. The team also open-sourced annotated data and 5,000 unannotated CoD summaries to enable further research.

Conclusion:

This study highlights the importance of achieving the right level of density in automated summarisation. Neither overly sparse nor overly dense summaries are optimal. The CoD technique, paired with human evaluation, offers a promising path toward readable yet informative AI-generated summaries.

Key takeaways:

- Ask for multiple summaries of increasing detail. Start with a short 1-2 sentence summary, then ask for a slightly more detailed version, and keep iterating until you get the right balance of conciseness and completeness for your needs.

- When asking ChatGPT to summarise something lengthy like an article or report, specify that you want an "informative yet readable" summary. This names the balance the research found readers prefer, although the study did not test this exact wording.

- Pay attention to awkward phrasing, strange entity combinations, or unconnected facts when reading AI summaries. These are signs it may be too dense and compressed. Request a less dense version.

- For complex topics, don't expect chatbots to convey every detail in a highly compressed summary - there are limits before coherence suffers. Ask for a slightly longer summary if needed.

- Remember that for optimal clarity and usefulness, AI summaries should have a similar density to those written by humans. Extreme brevity may mean missing key details.

The core takeaway is that density impacts the quality and usefulness of AI summarisation. As an end user, being aware of this can help you prompt for and identify the "goldilocks" level of density for your needs, avoiding summaries that are either frustratingly vague or confusingly overloaded. The Chain of Density research provides insights to guide this process.