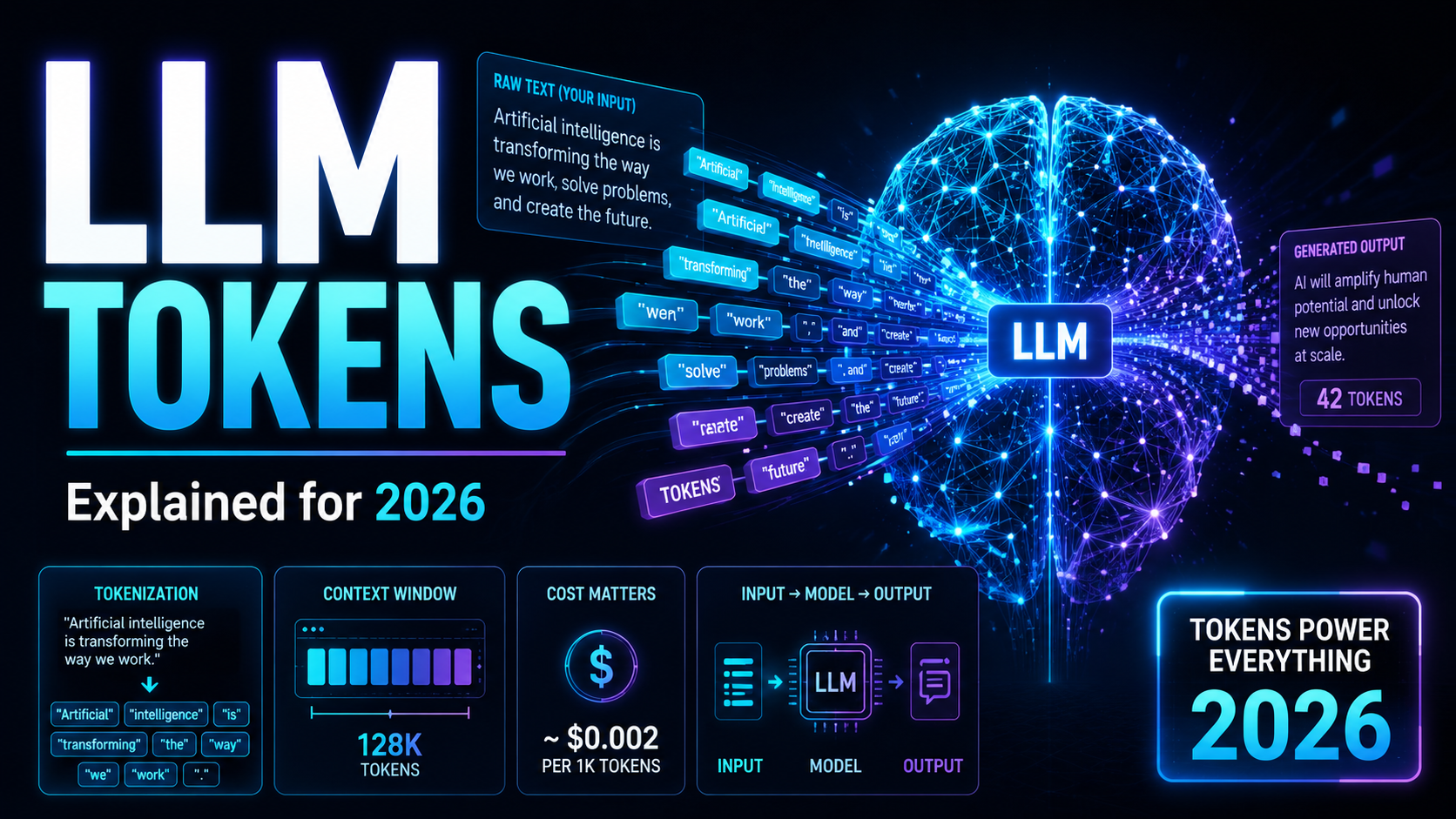

TL;DR — LLM tokens in 2026

- A token is the model’s context-and-billing unit, usually a subword fragment for text but increasingly applied to images, audio, video, files and tool schemas.

- The classic rule still works for plain English: ~4 characters or ~0.75 words per token. It is a heuristic, not a guarantee.

- Frontier context windows are now ~1M tokens across GPT-5.5, Claude 4.7 / Sonnet 4.6, Gemini 3.1 Pro and DeepSeek-V4.

- Pricing is now multi-bucket: input, cached input, output, and reasoning / thinking tokens are billed separately on most APIs.

- Tokenizer-version matters. Anthropic says Claude Opus 4.7 may use up to 35% more tokens on the same text than older models.

- Multimodal counts vary by provider: Gemini bills video at 263 tokens/sec and audio at 32 tokens/sec; Claude images run up to ~4,784 tokens on Opus 4.7.

- For accuracy, use provider-native counters. Local estimators miss tools, images, files and reasoning overhead.

What an LLM token actually is in 2026

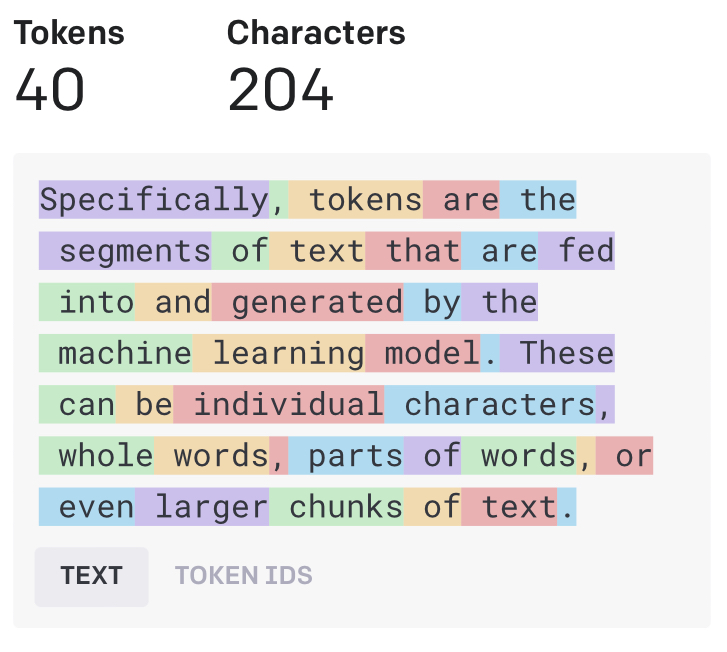

A token is best understood as a model-readable unit drawn from a vocabulary of recurring fragments. In practice a token might be a whole short word, part of a longer word, punctuation with surrounding whitespace, or a byte-derived fragment. OpenAI’s docs and the official tiktoken reference both emphasise that tokens are commonly occurring character sequences, not words in the human sense — a leading space, for example, is often attached to the following word, which is exactly why raw word counts and token counts diverge as soon as you measure them.

The familiar beginner rule still holds for plain English: OpenAI documents roughly 4 characters or 0.75 words per token, and Google documents roughly 4 characters and 60–80 English words per 100 tokens for Gemini. But both providers treat that as an approximation. DeepSeek goes further by quantifying language variance: its API docs estimate ~0.3 tokens per English character and ~0.6 tokens per Chinese character. Token counts depend on the tokenizer, the language, and the exact byte / character patterns in the input.

The most important conceptual upgrade for a 2026 audience is this: a token is now better described as the model’s fundamental accounting unit for context and billing, not merely its basic text “building block”. That broader definition matches how modern APIs expose usage metadata and pricing across text, non-text modalities, reasoning, and caches.

How tokenization works under the hood

Modern tokenization is, in practice, a subword problem. Whole-word vocabularies do a poor job with rare words, misspellings, morphology, names and multilingual text. Sennrich, Haddow and Birch’s 2015 BPE paper framed subwords as an open-vocabulary fix for translation: rare or unknown words can be encoded as sequences of smaller units instead of collapsing to <unk>.

Three families dominate production tokenizers:

- BPE (Byte Pair Encoding) — merges frequent symbol pairs into larger units. This is what powers OpenAI’s tokenizer and many open models.

- WordPiece — uses a longest-match-first strategy over a learned vocabulary. Famous from BERT.

- SentencePiece — trains subword models directly from raw sentences without assuming whitespace-delimited words. Critical for multilingual systems because it does not hard-code English-style preprocessing.

OpenAI’s tiktoken library is a useful production reference because its docs make the engineering tradeoffs explicit: BPE is reversible and lossless, works on arbitrary text, and compresses input so the token sequence is shorter than the original byte sequence — averaging about 4 bytes per token in practice. That is closer to how deployed LLM systems actually behave than “one token equals one word”.

How models use tokens after tokenization

After tokenization the model does not “see words”. It sees integer token IDs. Those IDs are mapped into vectors, positional information is added so token order matters, and transformer layers contextualise each token relative to the others through attention. This is the bridge between a string in your prompt and the internal numerical state the model actually manipulates. The original transformer paper made positional encoding necessary precisely because attention on its own does not encode order.

For decoder-style chat models, generation is autoregressive: the model predicts one token, appends it to the sequence, then predicts the next, repeating until it hits a stop condition or output limit. That iterative next-token loop is why token budgets matter twice — once for the input context, and again for the output the model is allowed to generate.

Long context is also why so much frontier engineering is now about tokens. Million-token windows, prompt caching, context compression and sparse-attention claims are all responses to the cost of storing, attending over and serving very long token sequences. “How many tokens?” is still one of the most practical questions in LLM engineering, not just a beginner curiosity.

What changed in current frontier models

OpenAI — GPT-5.5

GPT-5.5 is listed with a 1,050,000-token context window and 128,000 max output tokens. Standard API pricing is $5 / M input tokens, $0.50 / M cached input tokens, and $30 / M output tokens. OpenAI now provides an exact input-token counting endpoint for the same payload format used in the Responses API, and the docs explicitly recommend it over character-based estimates whenever images, files, tools or model-specific behaviour are involved. OpenAI’s prompt caching is automatic for eligible long prompts, kicks in from 1,024 tokens upward, and the docs say it can reduce latency by up to 80% and input-token cost by up to 90%. Reasoning models use internal reasoning tokens, discard them from later turns, and bill them as output tokens; the GPT-5.5 migration guide says the model reaches strong results with fewer reasoning tokens than prior generations.

Anthropic — Claude Opus 4.7 & Claude Sonnet 4.6

Claude Opus 4.7 and Claude Sonnet 4.6 both offer 1M-token context windows; Opus 4.7 has a 128k max output, Sonnet 4.6 has 64k. Anthropic prices Opus 4.7 at $5 / M input and $25 / M output, and Sonnet 4.6 at $3 / M input and $15 / M output. Anthropic’s most important token-specific 2026 change is unusually explicit: the company says Opus 4.7 uses a new tokenizer that may consume up to 35% more tokens for the same fixed text. That is a concrete example of why a token-focused blog in 2026 should treat token counts as model-version-specific rather than timeless constants.

Anthropic’s docs are also unusually revealing about token overhead outside plain text:

- The token-counting API supports tools, images and PDFs.

- Counts may include system-added optimisation tokens that are not billed.

- Tool use carries documented system-prompt overhead.

- Image-token heuristic: roughly

width × height / 750. - Most Claude models cap native image resolution around 1,568 tokens per image; Opus 4.7 is the first Claude model with high-resolution image support up to roughly 4,784 tokens per image (~3× prior models).

- Thinking is token-budgeted: current thinking tokens count toward output usage, while previous thinking blocks are stripped from future context.

Google — Gemini 3.1 Pro Preview & Gemini 3 Flash Preview

Gemini 3.1 Pro Preview and Gemini 3 Flash Preview both expose 1,048,576 input tokens and 65,536 output tokens. Gemini 3.1 Pro Preview is priced at $2 / M input and $12 / M output for prompts under 200k tokens, rising to $4 / M input and $18 / M output above that threshold. Gemini 3 Flash Preview is listed at $0.50 / M input and $3 / M output.

Two Google design choices are worth flagging:

- Gemini 3 models use dynamic thinking by default, and output pricing explicitly includes thinking tokens.

- Gemini usage metadata now surfaces multiple token categories: input, output, thought, cached content, and tool-use tokens. Token usage on Gemini is a structured accounting object, not a single integer.

Google is also one of the clearest providers on multimodal tokenization. Per its docs, images at or below 384 px on both dimensions count as 258 tokens, larger images are tiled into 768×768 patches at 258 tokens each, video is counted at 263 tokens per second, and audio at 32 tokens per second. Caching now includes implicit and explicit modes, with explicit caches defaulting to a one-hour TTL. Note that Google retired Gemini 3 Pro Preview on 9 March 2026 in favour of Gemini 3.1 Pro Preview, so use the newer naming when you write integrations.

DeepSeek — DeepSeek-V4-Pro & DeepSeek-V4-Flash

DeepSeek-V4-Pro and DeepSeek-V4-Flash both list a 1M-token context window and up to 384k output tokens — notably larger on the output side than the mainstream OpenAI, Anthropic or Google figures. DeepSeek states that thinking mode is supported on both and is the default. Context caching is enabled by default, uses overlapping-prefix cache hits, and the pricing page separates cache-hit and cache-miss input rates aggressively. Legacy names deepseek-chat and deepseek-reasoner are being phased out and currently map to the non-thinking and thinking modes of V4 Flash until 24 July 2026.

2026 token-economics comparison table

Quick side-by-side of the four major frontier API families. Pricing is per million tokens unless noted, snapshot taken 9 May 2026 — always re-check the provider docs before going live.

| Model | Context window | Max output | Input price | Cached input | Output price | Reasoning / thinking |

|---|---|---|---|---|---|---|

| GPT-5.5 (OpenAI) | 1,050,000 | 128,000 | $5 / M | $0.50 / M | $30 / M | Reasoning tokens billed as output; auto-discarded from later turns |

| Claude Opus 4.7 (Anthropic) | 1,000,000 | 128,000 | $5 / M | (per Anthropic caching docs) | $25 / M | Thinking tokens count toward output; previous blocks stripped |

| Claude Sonnet 4.6 (Anthropic) | 1,000,000 | 64,000 | $3 / M | (per Anthropic caching docs) | $15 / M | Thinking supported; lighter compute footprint |

| Gemini 3.1 Pro Preview (Google) | 1,048,576 | 65,536 | $2 / M (<200k) · $4 / M (≥200k) | Implicit + explicit (1h TTL default) | $12 / M (<200k) · $18 / M (≥200k) | Dynamic thinking by default; thought tokens included in output price |

| Gemini 3 Flash Preview (Google) | 1,048,576 | 65,536 | $0.50 / M | Implicit + explicit (1h TTL default) | $3 / M | Dynamic thinking by default |

| DeepSeek-V4-Pro | 1,000,000 | 384,000 | Cache-hit / cache-miss split | Default-on overlapping-prefix cache | Cache-hit / cache-miss split | Thinking is the default mode |

| DeepSeek-V4-Flash | 1,000,000 | 384,000 | Cache-hit / cache-miss split | Default-on overlapping-prefix cache | Cache-hit / cache-miss split | Maps to legacy deepseek-chat / deepseek-reasoner until 24 July 2026 |

Numbers compiled from each provider’s official documentation as of 9 May 2026. See the Sources section for direct links.

Why token counts now vary more than they used to

The biggest reason token counts feel slippery in 2026 is that providers now change token behaviour at the model-version level. Anthropic says so directly for Opus 4.7 (up to 35% more tokens on the same text). OpenAI’s token-counting docs warn that model-specific behaviour can affect counts, including reasoning and caching. Google’s Gemini 3.1 Pro page even markets “improved token efficiency” — a reminder that providers are now optimising not only model quality but also how much internal or external token budget they need to finish a task.

A second reason is payload structure. OpenAI’s token-counting docs say local tokenizers are inadequate for images, files, tools and schemas. Anthropic’s docs show a simple “Hello, Claude” example at 14 input tokens, but a request with a modest weather tool schema at 403 tokens. Anthropic’s pricing docs also document tool-use system-prompt overhead, and OpenAI’s realtime docs note that “special tokens aside from the content of a message” can make a 10-token message count as 12. Developers who only count user-visible characters can still miss costs by a large margin in agentic or tool-heavy systems.

A third reason is multilingual and multimodal variance. SentencePiece was specifically designed to support language-independent subword training from raw sentences. DeepSeek quantifies how English and Chinese can produce different token-to-character ratios. Google extends the idea beyond text with token-per-image-tile, token-per-second-audio and token-per-second-video rules. Once you combine language with modalities and tool schemas, “1 token ≈ 4 characters” becomes what it always should have been: a rough English-prose heuristic, not a general law of LLM usage.

Strategies for managing tokens in 2026

The classic 2023 advice still applies, but it now needs a few 2026 upgrades:

- Cache aggressively. Move stable instructions, retrieval boilerplate and tool schemas to the very start of your prompt so prompt caching (OpenAI, Anthropic, Google, DeepSeek) can hit them.

- Treat reasoning tokens as a budget. On GPT-5.5, Claude 4.7 and Gemini 3, you pay for thinking. Expose a knob for “thinking effort” rather than letting it default for every call.

- Prefer one big in-context document over many roundtrips on million-token models — it is often cheaper than orchestrating small calls.

- Strip prior thinking blocks from history if your provider does not auto-strip them.

- Tile or downscale images deliberately. Knowing Gemini bills 258 tokens per 768×768 patch and Opus 4.7 can spend ~4,784 tokens on a single high-res image changes how you preprocess inputs.

- Always measure with the provider’s native counter before you ship pricing models or quotas.

- Pin tokenizer versions in tests. A vendor swap or a new tokenizer rev can move your bill 20–35% overnight.

How to count tokens accurately

For a 2026 article the most defensible recommendation is to skip generic estimators and link people to provider-native counting tools:

- OpenAI token-counting guide — exact counts for Responses payloads.

- Anthropic token-counting docs — supports Messages payloads with tools, images and PDFs.

- Gemini tokens docs — multimodal counting + structured usage metadata (input / output / thought / cached / tool).

- DeepSeek API docs — downloadable tokenizer package and returned usage metadata.

- OpenAI Tokenizer (web demo) and tiktoken — great for quick text-only experiments.

Estimate locally if you want; measure with provider-native counters if accuracy matters. That is the production-era message that most distinguishes 2026 from 2023.

Frequently asked questions

Sources

- OpenAI — API concepts & tokens

- OpenAI — Token-counting guide

- OpenAI — GPT-5.5 model docs

- OpenAI Help — What are tokens and how to count them

- Anthropic — Pricing (Claude Opus 4.7, Sonnet 4.6)

- Anthropic — Models (Opus 4.7 tokenizer change)

- Anthropic — Token counting (tools, images, PDFs)

- Google — Gemini 3.1 Pro Preview model page

- Google — Gemini tokens (multimodal counting + caching)

- Google — Gemini API changelog

- DeepSeek — Pricing & V4 cache-hit / cache-miss

- openai/tiktoken on GitHub

- Sennrich et al. 2015 — BPE for subword units

- Kudo & Richardson 2018 — SentencePiece

- Vaswani et al. 2017 — Attention Is All You Need

- Brown et al. 2020 — GPT-3 (autoregressive LM)

Verification note: this article cites provider documentation as of 9 May 2026. The space changes fast — Google retired Gemini 3 Pro Preview on 9 March 2026 and DeepSeek’s V4 Pro discount runs only until 31 May 2026 UTC — so always re-check the provider docs for current model names, windows and prices before going to production.